Big Data and Mega Corpora in Middle East Studies

Charles Kurzman, “Big Data and Mega Corpora in Middle East Studies,” November 25, 2019

The humanities and social sciences don’t necessarily cross paths in the field of Middle East studies. At conferences, they attend separate panels and huddle in separate clusters for informal conversation. Increasingly, though, a subset of scholars across the humanities and social sciences is using the same set of research tools: text-analysis software that falls under the rubric of both “digital humanities” and “computational social science.”

This software makes use of a distinctively new source material of our era, the massive amount of text that is either born digital, like websites and social media, or has been scanned from paper into digital form. The field of Middle East studies, like other areas of scholarship, now has millions of pages (to use a paper-based measure) at its fingertips, allowing new sorts of research questions to be addressed. Although the humanities and social sciences may take up different questions, they share a common set of challenges in the assembly and processing of massive amounts of text – big data, as it is known in the social sciences, or mega corpora, as they are sometimes called in the humanities.

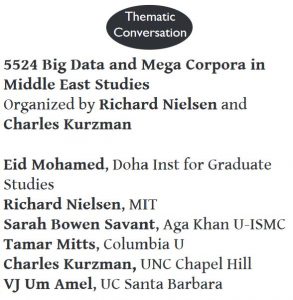

A “thematic conversation” at the recent annual conference of the Middle East Studies Association – “Big Data and Mega Corpora in Middle East Studies” – brought these two sets of scholars into conversation, many for the first time. Organized by a political scientist (Rich Nielsen of MIT) and a sociologist (myself), the session featured presentations by three scholars from distinct fields (a fourth presenter was unable to attend). All of them offered snapshots of the work that they are doing and the tools that they use for this work:

Eid Mohamed, a specialist in cultural studies at the Doha Institute for Graduate Studies, analyzed three Egyptian periodicals whose print runs spanned the first half of the 20th century. Now in digital form, these periodicals allow Professor Mohamed and his colleagues to track the prevalence of keywords such as hurriya (freedom) over decades, demonstrating how many of the concepts that animated the uprisings of the “Arab Spring” have a long provenance dating back more than a century. Professor Mohamed described his collaboration with computer scientists who work in the field of natural language processing to stem keywords – that is, to identify the root of a word from its many grammatical forms – and the potential for future work that may automate the analysis of sentence structures in Arabic.

Sarah Savant, a historian at the Aga Khan University, described the Kitab Project, a multinational collaboration that has amassed more than 3,000 Arabic and Persian texts from the 8th through the 15th century of the Common Era. Professor Savant and colleagues are focusing on intertextuality – the re-appearance of phrases of five or more words in multiple texts. The project has identified thousands of these re-occurrences, suggesting that many writings of this era quote routinely from earlier texts. Some of these re-occurrences involve phrases from the Qur’an, hadiths, isnads, and siras, and the project’s ongoing work seeks to identify and separate out these sources in order to examine the prevalence of other forms of intertextuality in the Islamic tradition.
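The idea of detecting re-used phrases of five or more words can be illustrated with a minimal sketch. This is not the Kitab Project's actual method, which the session did not describe in detail; it assumes simple whitespace tokenization, exact matching, and two invented English sentences standing in for real texts. The function names `word_ngrams` and `shared_passages` are hypothetical.

```python
def word_ngrams(text, n=5):
    """Return the set of all runs of n consecutive words in a text."""
    words = text.split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def shared_passages(text_a, text_b, n=5):
    """Return the n-word runs that appear verbatim in both texts."""
    return word_ngrams(text_a, n) & word_ngrams(text_b, n)


# Invented sample sentences, for illustration only.
earlier = "the scholar said that knowledge is the light of the heart and mind"
later = "it is reported that knowledge is the light of the heart in every age"

overlap = shared_passages(earlier, later)
for phrase in sorted(overlap):
    print(" ".join(phrase))
```

Exact matching of this kind would miss quotations with spelling variants or small rewordings, which is one reason real projects on historical texts need more forgiving comparison methods.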

Tamar Mitts, a political scientist at Columbia University, analyzed responses to 1,500 videos distributed by the self-described Islamic State on Twitter in 2015-2016. So they wouldn’t have to watch the videos themselves, Professor Mitts and colleagues used commercial software to analyze the content of the videos and identify whether or not they included depictions of violence against persons and property. Violence against persons decreased the likelihood that the video would be shared on Twitter, except among Twitter accounts that were already most predisposed to favor the Islamic State. Users who were exposed to nonviolent videos emphasizing the ideology and achievements of the Islamic State, by contrast, tweeted more pro-Islamic State messages than similar users who did not see these videos.

These three projects covered different time periods (8th-15th centuries, 20th century, and 21st century) and different forms of text (books, periodicals, and Twitter posts, including videos), with different goals (tracking themes over time, exploring intertextuality, and estimating the effects of texts on audiences). Nevertheless, conversation at the session and afterward – participants were split roughly evenly between humanities and social sciences – noted a variety of commonalities.

One of the issues that arose was the question of how to amass a systematic collection of texts on any given research question. In the study of the Middle East, texts may not be widely available in print form, and it can be hard to know what texts are missing from a digitized collection because many libraries in the region do not have online catalogs. Texts may be removed from circulation by government fiat, by civil disorder, by Twitter take-downs, and for many other reasons, leaving researchers with incomplete samples of texts to study. For texts published in the past century, copyright restrictions, proprietary databases, and the sheer volume of publications may prevent digitization of representative samples and limit the reliability of analyses based on the available texts. Still, as digitization continues at a rapid pace, scholars in the humanities and social sciences stand to benefit from the investments of libraries, tech companies, and individuals who are committed to the digitization of our historical legacy.

Another issue involved the quality and pace of optical character recognition (OCR), the process of turning scanned pages into searchable text. The Arabic language and Arabic-derived scripts present especially difficult problems for OCR, in part because of differences in typefaces. (For an overview of some of these challenges, see Mansoor Alghamdi and William Teahan’s 2018 paper and Maxim Romanov et al.’s 2017 paper on this subject.) These problems have only been partially solved, as yet, and it still takes a fair amount of human labor – and time – to train the software and check the output in order to achieve confidence-inspiring levels of accuracy.

Another issue involved stemming – identifying multiple forms of the same root word. In English and some other languages, stemming is relatively simple – remove -s from plurals, remove -ed from past tense, and so on, although there are tricky aspects in every language (such as plurals that don’t end in -s and words that end in -ed but are not verbs in the past tense). Arabic words are particularly difficult to stem. (For an overview, see Mohammed Mustafa et al.’s recent paper.) Until a reliable system is developed for stemming, analyses of Arabic texts may count two different forms of the same word as separate words. For some research subjects, this may not matter, but for others – such as sentiment analysis or the automated identification of subjects and verbs – stemming may be crucial.
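The simple English rules mentioned above, and the ways they go wrong, can be seen in a short sketch. This is a deliberately crude illustration, not a real stemming algorithm and not suitable for Arabic; the function name `naive_stem` and the minimum-length guard are assumptions made for the example.

```python
def naive_stem(word):
    """Strip a trailing -ed or -s. A crude rule, for illustration only."""
    for suffix in ("ed", "s"):
        # Assumed guard: leave very short words (such as "bus") untouched.
        if word.endswith(suffix) and len(word) > len(suffix) + 2:
            return word[: -len(suffix)]
    return word


examples = ["books", "walked", "children", "hundred", "bus"]
stems = {word: naive_stem(word) for word in examples}
for word, stem in stems.items():
    print(f"{word} -> {stem}")
```

The rule handles "books" and "walked" but leaves the irregular plural "children" unlinked to "child" and wrongly trims "hundred", which ends in -ed without being a past-tense verb.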

A further set of issues involved the control of texts, including considerations of privacy and intellectual property. Academics tend to favor open-source approaches to texts (at least other people’s texts), but participants at the session also voiced concerns over the rights of authors and publishers, who may wish to be compensated for the use of their materials, and the rights of research subjects, who may not want their social media posts and other information to be aggregated and stored in collections that could potentially expose them to risk.

A final set of issues concerned the work process required for the study of huge numbers of texts. This process may involve:

- Collecting digital texts, whether via scanning or scraping (large-scale copying of material from websites) or APIs (application programming interfaces, which allow requests for data from Twitter, Google, and many other online services).

- Processing digital texts, whether via optical character recognition or natural language processing or other mechanisms.

- Analyzing digital texts, whether via comparisons of phrases across texts, or frequency counts across time, or statistical inferences.
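As one small illustration of the analysis step, frequency counts across time might be sketched as follows. The example is in Python purely for illustration; it assumes texts that are already collected and processed, uses an invented three-item corpus, and relies on a hypothetical helper named `keyword_rate_by_decade`. It does not reproduce any presenter's method.

```python
from collections import Counter


def keyword_rate_by_decade(documents, keyword):
    """Return the keyword's share of all words, grouped by decade."""
    hits = Counter()
    totals = Counter()
    for year, text in documents:
        decade = (year // 10) * 10
        words = text.lower().split()
        hits[decade] += words.count(keyword)
        totals[decade] += len(words)
    # Divide by total words so decades with more text are comparable.
    return {d: hits[d] / totals[d] for d in sorted(totals) if totals[d]}


# Invented (year, text) pairs, for illustration only.
corpus = [
    (1905, "hurriya is the demand of the age"),
    (1908, "the press debated hurriya and reform"),
    (1923, "reform of the schools was debated"),
]

rates = keyword_rate_by_decade(corpus, "hurriya")
for decade, rate in rates.items():
    print(decade, round(rate, 3))
```

Reporting a rate rather than a raw count matters because the volume of surviving or digitized text usually differs from one period to the next.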

Conversation at the session revealed that many scholars in these fields are conducting a considerable portion of this work in the same open-source software package: R. This package is free, runs on almost any operating system, and is becoming a lingua franca for scholars working on big data and mega corpora. However, it takes some time to learn, and so does the seemingly endless variety of add-on packages (also free), each of which may be helpful for particular tasks that researchers may want to undertake.

In order to promote these skills, session participants suggested and endorsed a proposal to hold an R training workshop in conjunction with the 2020 meeting of the Middle East Studies Association in Washington, DC. This workshop may take the format of a half-day event with multiple tracks. An introductory track, for people who would like to learn what they could do with R and how to get started, would involve installing R, loading a set of texts, and developing basic analyses. Other tracks would address more specialized skills, with experienced scholars sharing their know-how with scholars who already have a basic understanding of R.

The session considered creating a listserv for scholars in Middle East studies to stay in touch with one another and plan future collaborations, including the proposed workshop at MESA. Fortuitously, but not surprisingly, one of the participants at the session noted that such a listserv already exists: the Islamicate Digital Humanities Network. While the focus of this network has been scholars in the humanities, the network is enthusiastic to include the computational social sciences as well. Contact information is available on the network’s website.

This new era of digital information is a research bonanza, but it also presents a steep learning curve, especially for those of us trained in earlier eras. Hiking up this learning curve may be easier and more pleasant when undertaken together.